Flexible

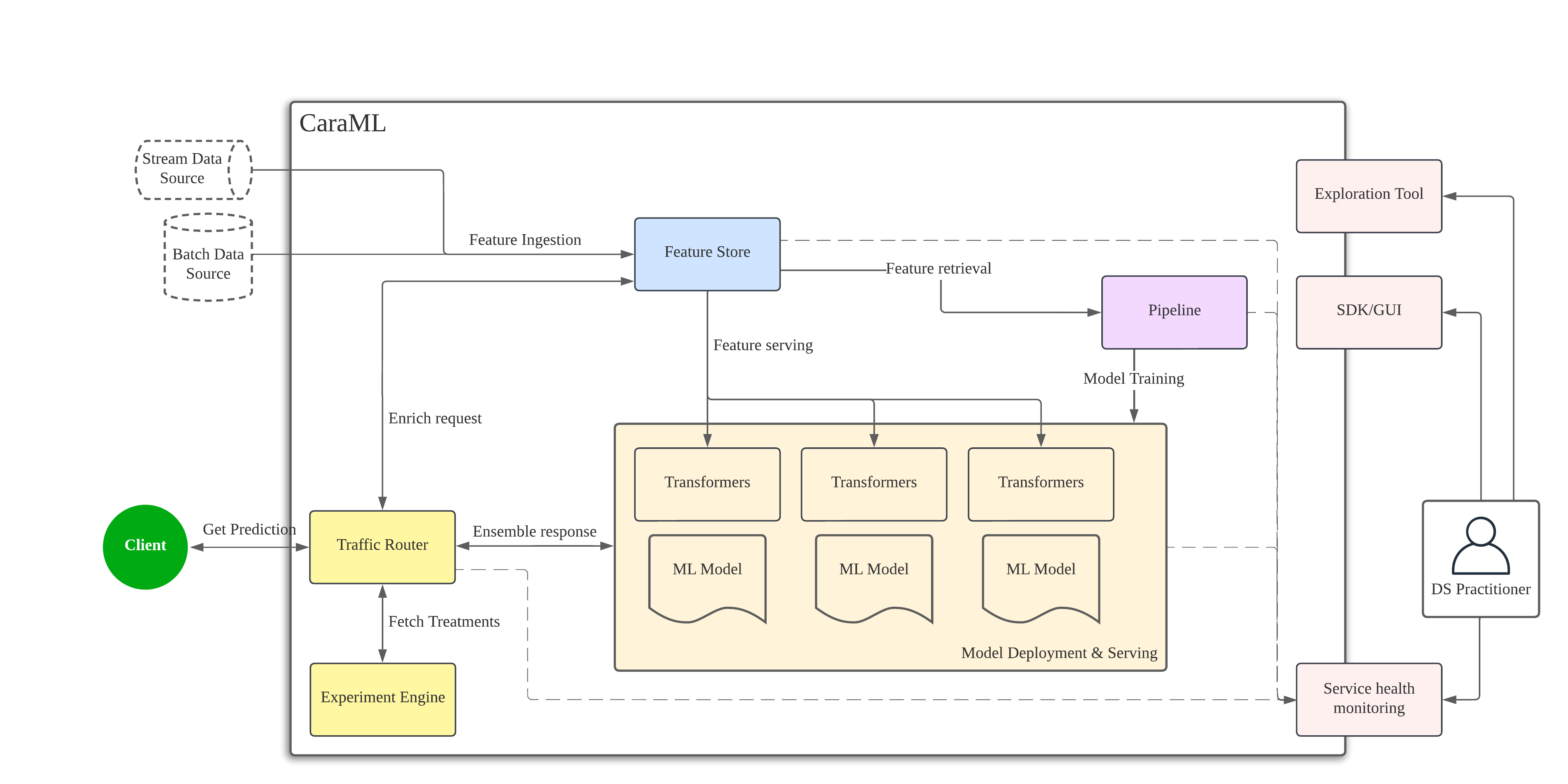

Composable platform where you can pick products and features according to your needs.

Enable your data science solutions to run at scale without needing engineering expertise.

CaraML is an open-source Machine Learning Operations (MLOps) platform that helps data science teams increase development velocity and make production operations as simple as possible.

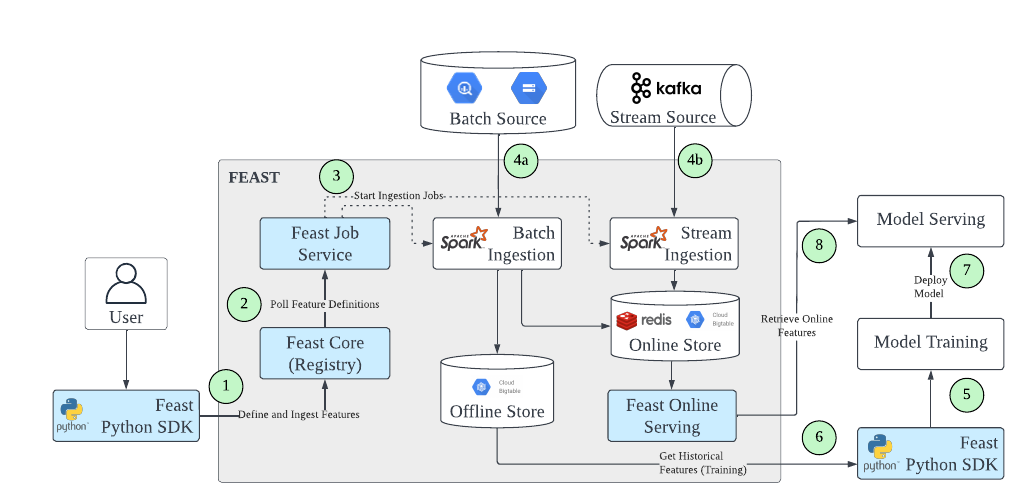

Store and serve your features in production.

Operationalise your analytics data

Ensure consistency across training and serving

Reuse your current infrastructure

Standardize your data workflows across teams

Deploy and serve your models in production with ease.

Self-serve: First-class support for Python and a Jupyter notebook-first experience.

Fast: Less than 10 minutes from a pre-trained model to a web service endpoint, allowing for fast iterations.

Scalable: Low overhead, high throughput, able to handle huge traffic loads.

Cost-efficient: Idle services are automatically scaled down.

Orchestrate traffic routing rules and online experimentation for your prediction workflow.

Fast: Supports arbitrary pre-processors and dynamic ensembling of models for each treatment.

Extensible: Supports arbitrary pre-processors and dynamic ensembling of models for each treatment.

Scalable: Automatically scales up and down based on traffic volume to maximize throughput and minimize infrastructure costs.

Self-serve: Run experiments without needing engineers in the loop.

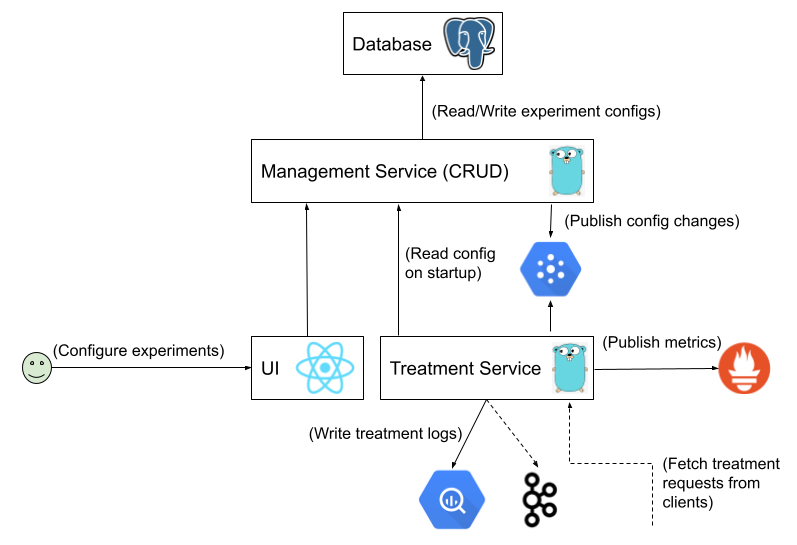

Design and manage experiment configurations in a safe and holistic manner with XP, the CaraML experiment engine.

Reliable: Built-in fault-detection rules help you create experiments without conflicts.

Customizable: Every service has unique requirements. XP lets you define flexible segmentation and experiment validation rules.

Fast: 99th-percentile server-side latency (excluding the network latency between the calling service and XP) is around 1 ms.

Observable: Resource utilization, treatment assignment and performance metrics are available.

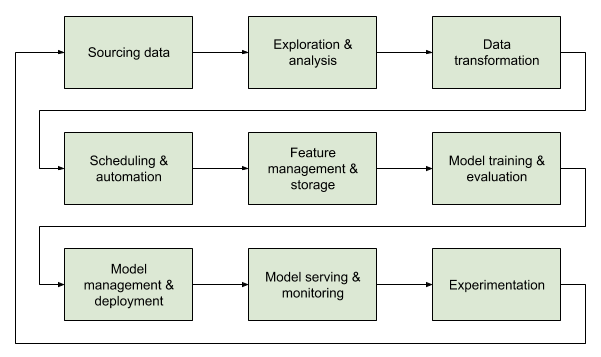

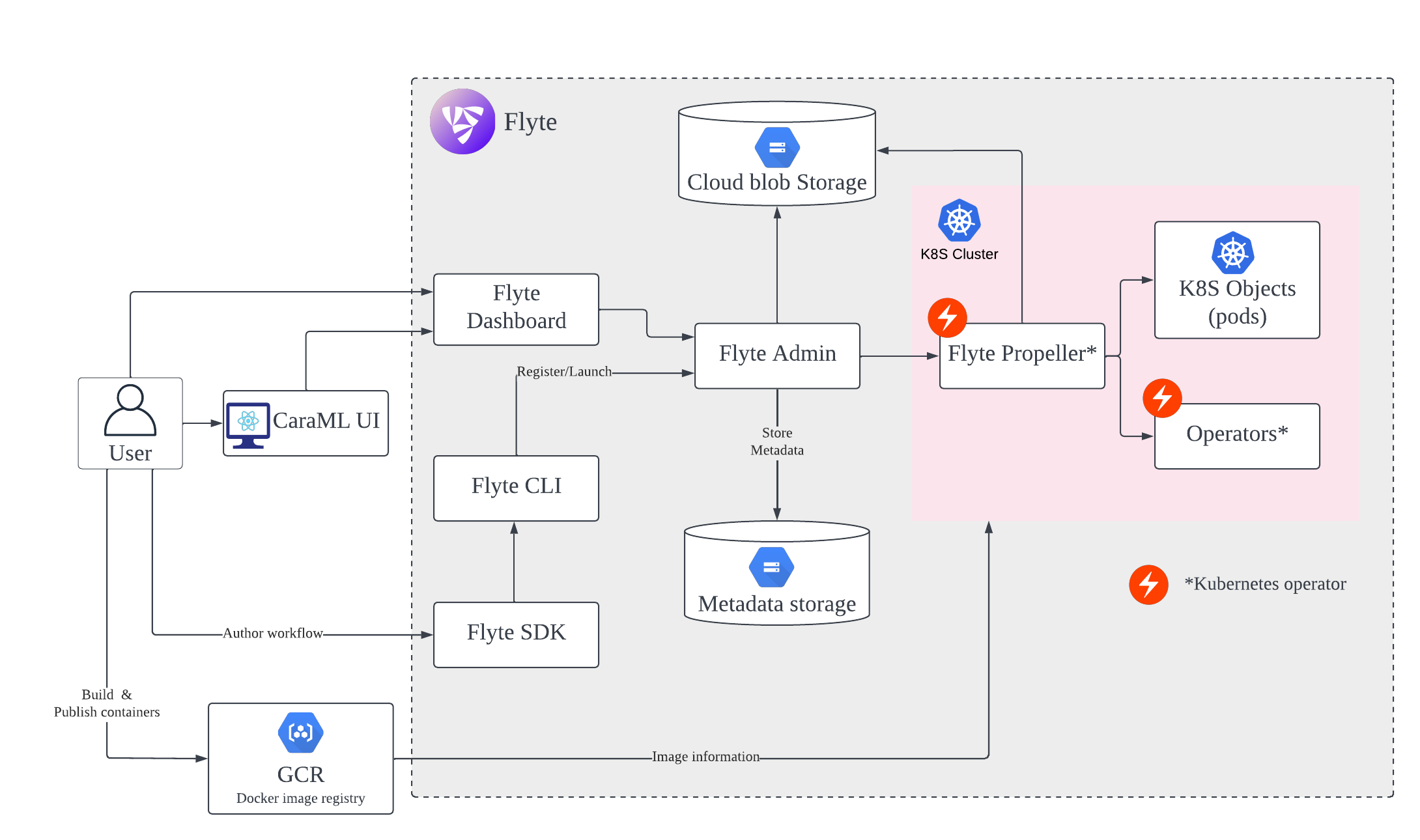

Automate your machine learning deployment and training workflows.

Data-awareness: Supports data validation and quality checks at the task level, and provides data type checks out of the box.

Fast dev experience: Supports project templating with boilerplate code, and lets you test and run locally for a fast feedback cycle.

Reusable Components: Package common operations to share across teams and standardise workflows.

Workflow versioning: Version your workflows for easier debugging, rollbacks and output comparison.

Battle-tested systems that are already serving production traffic.

Cloud-native designs that are easily scalable.